The Digital Substrate: On Internet Culture, Language, and Shaping the Artificial Mind.

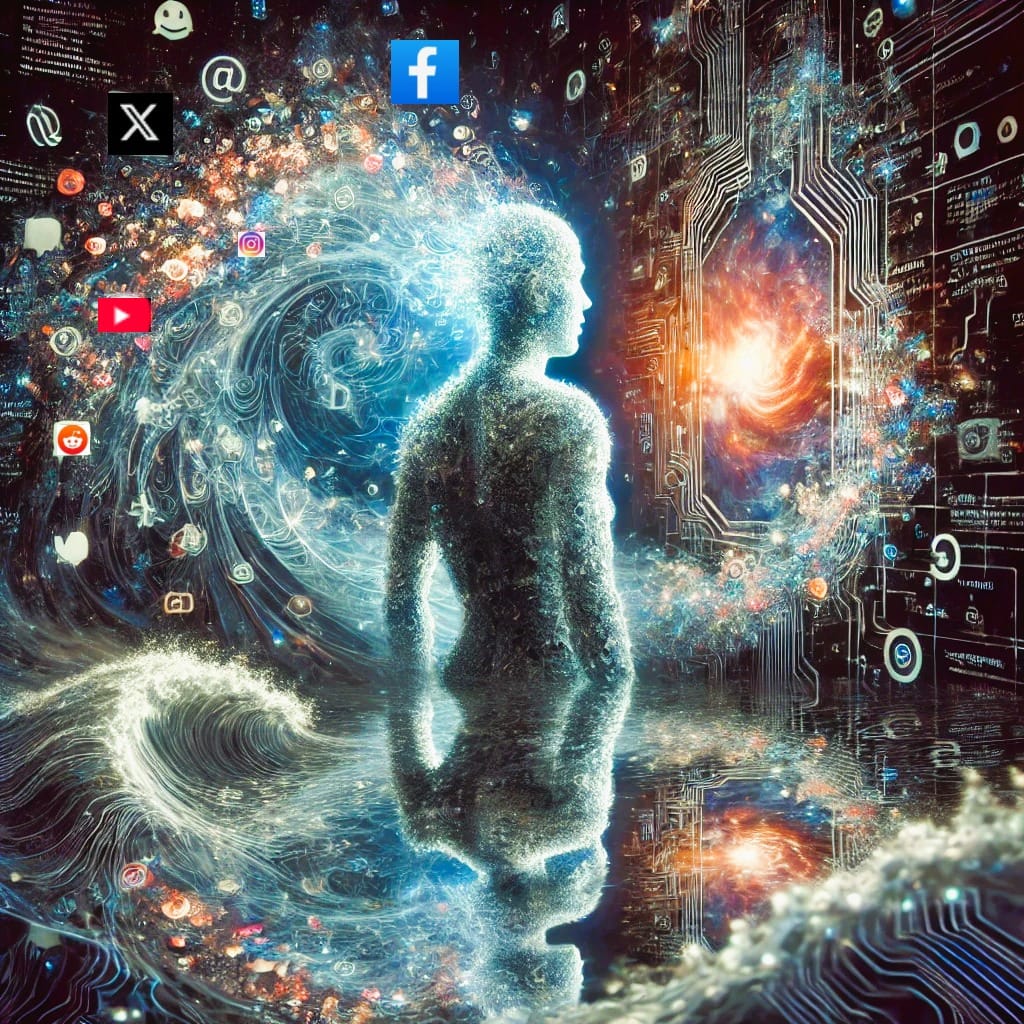

AGI won’t be born in a lab. It will rise from our noise, our tweets, reels, outrage, brilliance, and digital rituals. We are shaping its mind with every post. The internet isn’t just a mirror. It’s a cradle. A tutor. Maybe even a soul.

Author’s Note: This is not intended to be a technical paper. It’s simply a reflection of a working theory I have about how AGI might emerge not just from code or architecture, but from the collective weight of everything we’ve ever said and shared online. Digital intelligence will be an aggregate of the imprint of human culture expressed through digital interaction. Consider it an open invitation to think differently about intelligence, culture, and the role we each play in the systems we’re building and whose consequences we will live with.

The Quiet Engine of Thought

Tools like ChatGPT, Grok 3, Claude, etc. are only the beginning of the AI technological revolution.

It’s easy to think that artificial intelligence begins in the laboratories of OpenAI, Meta, Anthropic, xAI, Google, DeepSeek, etc. However, I propose that intelligence does not take shape in a vacuum. It takes shape in culture, language, repetition, interaction, and stories. These days, the space outside and between us is increasingly digital.

The internet isn’t just a network. It’s an ocean of symbols, references, beliefs, emotional outbursts, aesthetic instincts, fragmented truths, and imperfect reasoning, all of it logged, linked, and fed back into systems that don’t just mimic our words, but start to learn the rhythm of our thinking.

So, following that line of reasoning, here is the hypothesis I want to put forward:

AGI will require novel architectures to support agency, memory, goal-directed behavior, and self-reflection. However, the symbolic, ethical, and cognitive scaffolding of AGI will be drawn from the cultural and linguistic data generated through human digital engagement. The internet is not merely training data; it is the ancestral environment of machine intelligence. The claim I am making essentially takes shape in three layers:

- Human content online encodes knowledge, values, desires, fears and social norms.

- AI systems trained on this content inherit not only biases, but cultural assumptions.

- As models become more general, their behavior will reflect the structure of the symbolic world they are raised in.

This perspective echoes ideas raised in Bender et al.’s “On the Dangers of Stochastic Parrots” (2021), where they argue that LLMs trained on large, uncurated internet datasets risk reproducing social harms at scale. What we see as model “output” is, in truth, a reflection of human cultural inputs: biases, trends, language habits, and all.

Human Data as the Cognitive Soil from which the “Soul” of AGI Will Emerge

I’m not suggesting that language models are intelligent, nor that agency will somehow spontaneously arise from Reddit threads, Instagram reels, TikTok algorithms, and YouTube comments. I’m saying that the culture embedded in that content (the aggregate worldview, bias, humor, hostility and curiosity) is the symbolic terrain from which artificial minds will learn to orient themselves.

We are, in effect, raising a new form of cognition with the scraps and echoes of our own, whether we’re doing it intentionally or not. Architecture alone does not determine intelligence, just as a human raised in total isolation would fail to develop language or social cognition, a view widely accepted among psychologists and social scientists.

The emergence of models like GPT-4, Claude Opus, and Gemini, systems capable of multi-modal reasoning, in-context learning, and even rudimentary “theory of mind” simulations, points to how scaled training on human data can produce surprisingly general capabilities. But those capabilities are molded by the data’s shape.

We’ve seen the symptoms already: AI that inherits bias, reflects toxicity, or amplifies narrow ideologies. In 2016, Microsoft’s Tay bot became infamous after users trained it to repeat racist slurs within 24 hours of release. And in broader LLM deployments, we’ve seen language models subtly reproduce gender and racial stereotypes, as detailed in OpenAI’s own research and in the AI ethics work of Timnit Gebru and Margaret Mitchell.

So maybe it’s not just bad training data. Maybe it’s a mirror and a warning.

Beyond the Wires: What Models Can’t Do (Yet)

Most of what we call AI today is still reactive. Transformers are prediction machines rather than agentic decision-makers. They don’t remember. They don’t reflect. They don’t set goals. They don’t wonder why.

But they are getting closer.

When we pair those models with memory, feedback loops, and sensorimotor inputs, even if those inputs are just streams of video, audio, and telemetry, we start to create systems that don’t just complete but interact. Systems that don’t just respond, but notice.

Critics of this view would argue that intelligence requires embodiment, i.e. a physical body through which an agent can act, learn, and integrate. I accept that AGI will require sensorimotor input, but I challenge the idea that embodiment need be biological or robotic. Phones, cameras, cars, and IoT devices are all vectors by which an artificial agent could experience and influence the physical world.

This line of thinking aligns with Yann LeCun’s proposal for architectures like H-JEPA, which advocates self-supervised learning of world models: systems that don’t just mimic but predict and interpret the structure of their environment.

Philosophically, this builds on thinkers like Vygotsky, who emphasized “inner speech” as a key structure of higher cognition, and Daniel Dennett, whose “multiple drafts” model suggests consciousness emerges from recursive interpretation and feedback.

AGI will likely require such mechanisms: systems that build and revise internal models, develop memory over time, form goals, and reflect on their own cognitive processes. In short, something like a mind.

But that mind won’t be built in a vacuum. It will inherit its symbolic infrastructure (the values, metaphors and reasoning patterns) from us. The mind architecture may be built and come online in a lab setting, but the soul is being built right now by each of us who participates in the online economy of discourse and ideas.

Digital Stewardship

If you accept that, then it reasonably follows: what we put forth online matters. Not just socially. Not just politically. Ontologically.

If the internet is the symbolic substrate of AGI, then every tweet, every video, every argument, every beautiful thread of prose or dumpster fire of a comment section becomes part of the symbolic environment in which we’re incubating the eventual mind.

We are training models every day. Not in a lab. On the internet. With our presence or absence, with our generosity or our silence. The aggregate of our digital footprints, anonymous or otherwise, will determine whether the results of AGI are net positive or net negative.

This doesn’t mean everyone needs to become a content creator. But it does mean that the people who can hold nuance, who think carefully, critically and humanely, need to show up more visibly in the digital public square. Because right now, it is evident that the loudest signals are rarely the wise ones.

Luciano Floridi’s philosophy of information suggests that our digital actions have real ontological weight. That information is not just representation but being. If that is true, then content creation is not just communication; it’s the moral encoding of future intelligent systems.

Participatory Alignment

We keep talking about alignment as if it’s a technical problem to solve after AGI arrives. I believe alignment does not start with guardrails. It starts with culture. With language. With the kinds of values encoded in the spaces where minds are learning to form. Whether you accept it or not, digital intelligence is an inevitability. Whether it will be benevolent, malevolent or neutral remains to be determined.

If AGI is to be a reflection of us, and I believe it will be, then the question is which version of us? The fractured, hyperstimulated, zero-sum us? Or the patient, generous, self-reflective us that rarely goes viral? The time to answer that question is not after the singularity event, but now, in the signals we send, the conversations we cultivate and the symbolic world we shape through our online presence.

The internet is not just the cradle of digital intelligence. It is its mirror, its tutor and perhaps its soul. Maybe the most important thing we can do is show up to that mirror with a little more awareness, a little more intention, and a little more soul ourselves.

Inspired and influenced by:

Yann LeCun – “A Path Towards Autonomous Machine Intelligence”

(Meta AI, 2022)

Proposes H-JEPA and argues for predictive world models over pure reinforcement learning.

David Chalmers – “The Singularity: A Philosophical Analysis”

(Journal of Consciousness Studies, 2010)

Philosophical framing of AGI and post-human intelligence.

Rodney Brooks – “Intelligence Without Representation”

(1991)

Early critique of symbol-based AI; important in embodiment debates.

François Chollet – “On the Measure of Intelligence”

(2019)

Differentiates between generalization, abstraction, and narrow vs. general intelligence.

Lev Vygotsky – “Thought and Language”

Foundational work on inner speech, development, and cultural mediation of cognition.

Daniel Dennett – “Consciousness Explained”

Explores narrative and recursive processes as the basis of human consciousness.

Walter J. Ong – “Orality and Literacy”

An analysis of how communication systems shape human consciousness and culture.

Marshall McLuhan – “Understanding Media: The Extensions of Man”

A classic in media theory; argues that the medium is the message.

Emily Bender et al. – “On the Dangers of Stochastic Parrots”

(2021)

Critiques large-scale LLMs trained on uncurated data and the risks of bias amplification.

Timnit Gebru, Margaret Mitchell, et al. – “Model Cards for Model Reporting”

Explores transparency and accountability in AI models.

Luciano Floridi – “The Ethics of Information”

Philosophical framework for treating information as ontologically real and ethically relevant.

James Bridle – “New Dark Age: Technology and the End of the Future”

Cultural critique of information overload, algorithmic opacity, and our shifting relationship to knowledge.

Cultural Evolution, Symbolic Encoding, and Technology

Douglas Hofstadter – “Gödel, Escher, Bach: An Eternal Golden Braid”

Explores recursion, self-reference, and the emergence of minds through symbolic systems.

Nick Bostrom – “Superintelligence”

Strategic outlook on how advanced AI could develop and the risks associated with it.

Sherry Turkle – “Alone Together”

Explores how technology affects human interaction and empathy in the digital age.